Have those warning labels been shown to work like at all? We already have awareness saturation about just how awful cigarettes are for you.

With the government delivering this message to our youth, I think they’ll work as well as the anti-piracy ones back in the day.

You Wouldn’t Steal a Car

What do you mean by work? Do they stop everyone from doing stupid things? No. Do they have a measurable effect on behavior? Yes.

So why don’t we put them on guns?

We probably don’t want to use the current leader in cause of death for kids as a template for good policy.

Not at all what I was suggesting. If warning labels save lives why are they not on guns?

My guess is gun advocates think it’s a restriction on the 2nd Amendment?

I see.

Well, as long as opinions matter more than data now. Might as well criminalize TikTok with one hand and give out free AR-15s to mentally ill 18-year-olds with the other.

Warnings probably work better on products you’re putting in your body. If you have blackened lungs on the cigarette packaging I can’t imagine choosing to smoke.

On social media, you basically have to destroy my experience for me to stop using it in the same way. All effective options are terrible: ads, microtransactions, auto-playing unexpected sounds, nonresponsive interfaces.

I don’t get why people think this idea is equivalent to stuff like internet access bans or COPPA. It’s a warning label, not an “enter your ID to access” page.

They never banned cigarettes, but putting a giant warning on the box did help in vilifying cigarettes as very unhealthy and wrong.

I doubt it’ll go anywhere in this age of government, but it’s exactly the type of thing I would have gone for if I were tasked with solving a societal issue. It’s smart because it has no real effect on access, so social media companies would have a harder time fighting it, but it also gives a big bloody warning which does have a substantial psychological impact on users.

iirc someone did something similar with a very simple “are you sure?” app that gave a prompt asking if you were sure you wanted to post something or send a text. Just having a single prompt was enough for many people to reconsider their stupid text or comment.

Let he who has to deal with that friend who constantly sends blatantly false Xits to them throw the first stone. Honestly I feel like every social media post that makes a factual representation should come with a big flashing warning “THIS IS ALMOST CERTAINLY FALSE, LOOK IT UP BEFORE YOU REPEAT IT YOU DUMMY!”

And I’m only like 10% joking. Given the success of language models it should be moderately trivial to train one to recognize when a factual statement is made and apply the above warning. It’s not even the children and teens I’m worried about. The people who seem to have the most trouble handling this are the adults.

I’m not sure where you’re getting the idea that language models are effective lie detectors. It’s very widely known that LLMs have no concept of truth and hallucinate constantly.

And that’s before we even get into inherent biases and moral judgements required for any form of truth detection.

The point isn’t to have it be a lie detector but a factual claim detector. So you have a neural network that reads statements and says “this thing is saying something factual” or “this is just an opinion/obvious joke/whatever” and a person grades the responses to train it. So then the AI just says “hey, this thing is making some sort of fact-related claim” and then the warning applies no matter what.
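For what it’s worth, here is a toy sketch of that idea in Python. To be clear about the assumptions: this is a tiny bag-of-words perceptron, not a language model or a real neural network, and the `ClaimDetector` class, the `label_post` helper, the warning text, and the four graded examples are all made up for illustration. It only shows the shape of the loop: a person grades statements as “factual claim” or “not”, the model learns from the grades, and the warning gets attached to anything flagged as a claim, true or false.

```python
import re

# Hypothetical warning text, loosely based on the one joked about in this thread.
WARNING = "[WARNING: factual claim, look it up before you repeat it] "


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class ClaimDetector:
    """Toy perceptron over a bag of words. A stand-in, not a language model."""

    def __init__(self):
        self.weights = {}
        self.bias = 0

    def score(self, text):
        # Sum the learned weight of every known word, plus the bias.
        return self.bias + sum(self.weights.get(t, 0) for t in tokenize(text))

    def is_claim(self, text):
        return self.score(text) > 0

    def train(self, graded, epochs=10):
        """graded: list of (text, is_factual_claim) pairs labeled by a person."""
        for _ in range(epochs):
            mistakes = 0
            for text, label in graded:
                error = int(label) - int(self.is_claim(text))
                if error:
                    # Standard perceptron update: nudge weights toward the label.
                    mistakes += 1
                    self.bias += error
                    for t in tokenize(text):
                        self.weights[t] = self.weights.get(t, 0) + error
            if mistakes == 0:
                break  # every graded example is now classified correctly


def label_post(detector, text):
    """Prefix the warning to anything flagged as a claim, regardless of truth."""
    return WARNING + text if detector.is_claim(text) else text


# Made-up graded examples; a real system would need vastly more data.
GRADED = [
    ("the vaccine saved millions of lives", True),
    ("lol whatever buddy", False),
    ("teen suicide rates are increasing", True),
    ("i think this is dumb", False),
]

detector = ClaimDetector()
detector.train(GRADED)

print(label_post(detector, "the vaccine saved lives"))
print(label_post(detector, "lol buddy"))
```

Note that the detector never judges whether a claim is true. It only decides whether something looks like a claim, so true and false statements get the same label. Whether a real model handles sarcasm and context well enough is a separate question this toy does not answer.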

> Given the success of language models it should be moderately trivial to train one to recognize when a factual statement is made and apply the above warning.

Is it??? Because I feel like context is a real weak point for bots and AI to figure out.

Hell, it feels like half the HUMANS don’t know what’s factually true. Is the covid vaccine a society-saving development which saved the lives of millions? Or is it full of Bill Gates mind control computer chips to rule over the portion of society dumb enough to get the vaccine willingly?

Who’s to say?

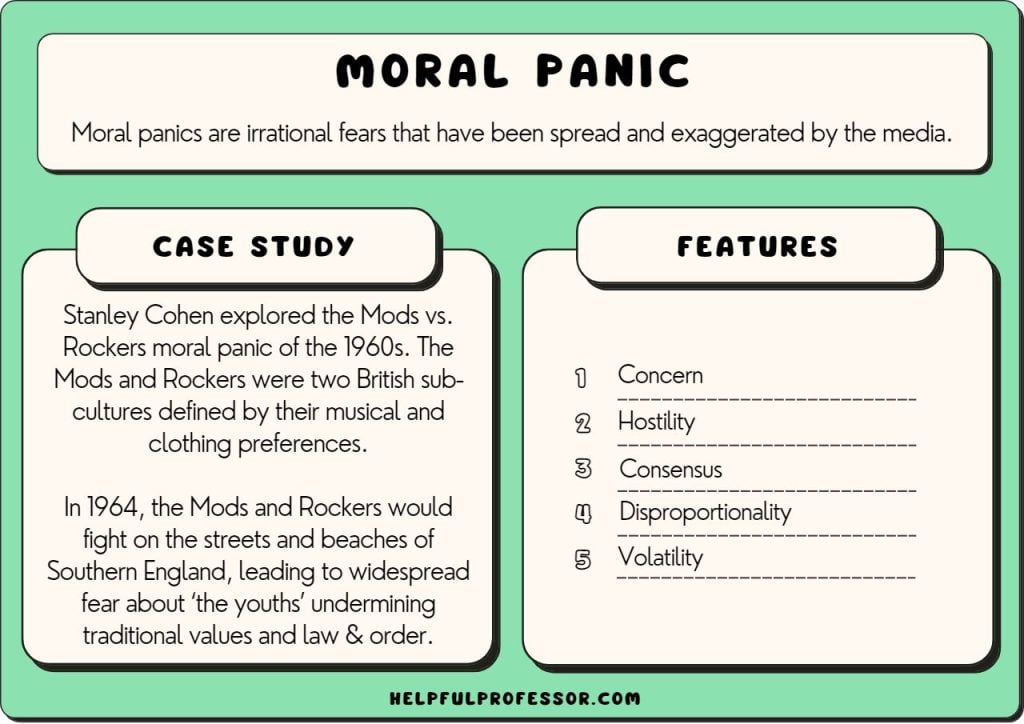

Oh, did they have studies showing that the mods and rockers damaged people’s mental health? Is that how this is the same?

“studies”

Probably with the same methodology that led to comic book burnings

deleted by creator

Go to pubmed. Type “social media mental health”. Read the studies, or the reviews if you don’t have the time.

The average American teenager spends 4.8 hours/day on social media. Increased use of social media is associated with increased rates of depression, eating disorders, body image dissatisfaction, and externalizing problems. These studies don’t show causation, but guess what, we literally cannot show causation in most human studies because of ethics.

Social media drastically alters peer interactions, with negative interactions (bullying) associated with increased rates of self harm, suicide, internalizing and externalizing problems.

Mobile phone use alone is associated with sleep disruption and daytime sleepiness.

Looking forward to your peer-reviewed critiques of these studies claiming they are all “just vibes.”

Kids these days with their newfangled smartphones. Back in my day we made new friends at a lynching or at the sock hop.

Everything after I was 21 is shit!

https://www.cdc.gov/mmwr/volumes/66/wr/mm6630a6.htm

Teenage suicide rates were declining for over a decade, especially in males. Now they are increasing in both males and females. You would have to be a complete monster to not want to study, understand, and reverse this trend.

The homicide rate and suicide rate are inversely correlated. One goes up the other goes down. As a whole the country is getting less violent so this is a predictable result. And it doesn’t require anyone to invent a communist plot to sap and unpurify our precious bodily fluids or gay frogs.

I agree it’s far from ideal. I might suggest that we don’t actively work hard to kill the middle class and maybe stop school shootings. But we won’t do that when it is easier for us to blame Emmanuel Goldste— sorry, TikTok.

deleted by creator

Pretty disingenuous to say this person is acting like an antivaxxer for reading medical journals, when one comment down you admit to forming your opinion by browsing nature, and not being a field expert yourself.

Your comments display hypocrisy and you should commit one way or another.

If you do the search I suggested you will find relevant reviews immediately. If you add keywords based on my post text you will find the primary sources immediately.

No, it’s just based on vibes.

You didn’t bother looking, clearly.

Edit: I’m not saying I’m familiar with what the studies say, although some draw a clear link with adverse mental health impacts on kids. Not sure how far that goes. I’m also not saying I agree with the SG or the need for warning labels, but to say this is based on “vibes” is, ironically, speculative at best.

Tell me you didn’t read the article without telling me.

> Tell me you didn’t read the article without telling me.

Why would you conclude that? Because it conflicts with your “vibe”?

Yeah buddy whatever you want to be true is.

You know they are turning the frogs gay? Read about it on my 5G Mark of the Beast Covid microchip

It’s a pity you aren’t worth responding to. Have a nice day!

deleted by creator

So you acknowledge that you don’t have the skills necessary to interpret papers so… what, you decide that Nature adequately represents their findings enough to dismiss them? Even though you say there is little evidence of a causative link? Even though the surgeon general says they feel there is and cites that evidence to back it up?

I mean… what?

If the major psychological/pediatric organizations come out in support of this, I’ll eat my words. [Edit] words: Eaten

https://www.apa.org/news/press/releases/2024/06/social-media-youth

I would interpret the American Academy of Pediatrics’ stance as being supportive. But that’s open to interpretation, I suppose.

The APA “welcomes” the warning, so I’m eating my words. https://www.apa.org/news/press/releases/2024/06/social-media-youth