Instagram is profiting from several ads that invite people to create nonconsensual nude images with AI image generation apps, once again showing that some of the most harmful applications of AI tools are not hidden on the dark corners of the internet, but are actively promoted to users by social media companies unable or unwilling to enforce their policies about who can buy ads on their platforms.

While parent company Meta’s Ad Library, which archives ads on its platforms, who paid for them, and where and when they were posted, shows that the company has taken down several of these ads previously, many ads that explicitly invited users to create nudes and some ad buyers were up until I reached out to Meta for comment. Some of these ads were for the best known nonconsensual “undress” or “nudify” services on the internet.

It’s all so incredibly gross. Using “AI” to undress someone you know is extremely fucked up. Please don’t do that.

I’m going to undress Nobody. And give them sexy tentacles.

Behold my meaty, majestic tentacles. This better not awaken anything in me…

Would it be any different if you learned how to sketch or photoshop and did it yourself?

You say that as if photoshopping someone naked isn’t fucking creepy as well.

Creepy to you, sure. But let me add this:

Should it be illegal? No, and good luck enforcing that.

You’re at least right on the enforcement part, but I don’t think the illegality of it should be as hard of a no as you think it is.

This is also fucking creepy. Don’t do this.

I am not saying anyone should do it and don’t need some internet stranger to police me thankyouverymuch.

Yet another example of multibillion-dollar companies that don’t curate their content because it’s too hard and expensive. Well too bad maybe you only profit 46 billion instead of 55 billion. Boo hoo.

It’s not that it’s too expensive, it’s that they don’t care. They won’t do the right thing until and unless they are forced to, or it affects their bottom line.

Wild that since the rise of the internet it’s like they decided advertising laws don’t apply anymore.

But Copyright though, it absolutely does, always and everywhere.

Your example is a 9 billion difference. This would not cost 9 billion. It wouldn’t even cost 1 billion.

Shouldn’t AI be good at detecting and flagging ads like these?

“Shouldn’t AI be good” nah.

“Well too bad maybe you only profit 46 billion instead of 55 billion.”

I can’t possibly imagine this quality of clickbait is bringing in $9B annually.

Maybe I’m wrong. But this feels like the sort of thing a business does when it’s trying to juice the same lemon for the fourth or fifth time.

It’s not that the clickbait is bringing in $9B, it’s that it would cost $9B to moderate it.

YouTube has been for like 6 or 7 months, even with famous people in the ads. I remember one for a while with Ortega.

Ortega? The taco sauce?

NSFW-ish

I sat down with tacos as I opened up that reply.

Witch.

I guess that’s an ORTEGAsm

Don’t use that as lube.

Good, let all celebs come together and sue zuck into the ground

It’s funny how many people leapt to the defense of the Section 230 liability protection in Title V of the Telecommunications Act of 1996, as this helps shield social media firms from assuming liability for shit like this.

Sort of the Heads-I-Win / Tails-You-Lose nature of modern business-friendly legislation and courts.

So many of these comments are breaking down into arguments of basic consent for pics, and knowing how so many people are, I sure wonder how many of those same people post pics of their kids on social media constantly and don’t see the inconsistency.

Isn’t it kinda funny that the “most harmful applications of AI tools are not hidden on the dark corners of the internet,” yet this article is locked behind a paywall?

The proximity of these two phrases meaning entirely opposite things indicates that this article, when interpreted as an amorphous cloud of words without syntax or grammar, is total nonsense.

The arrogant bastards!

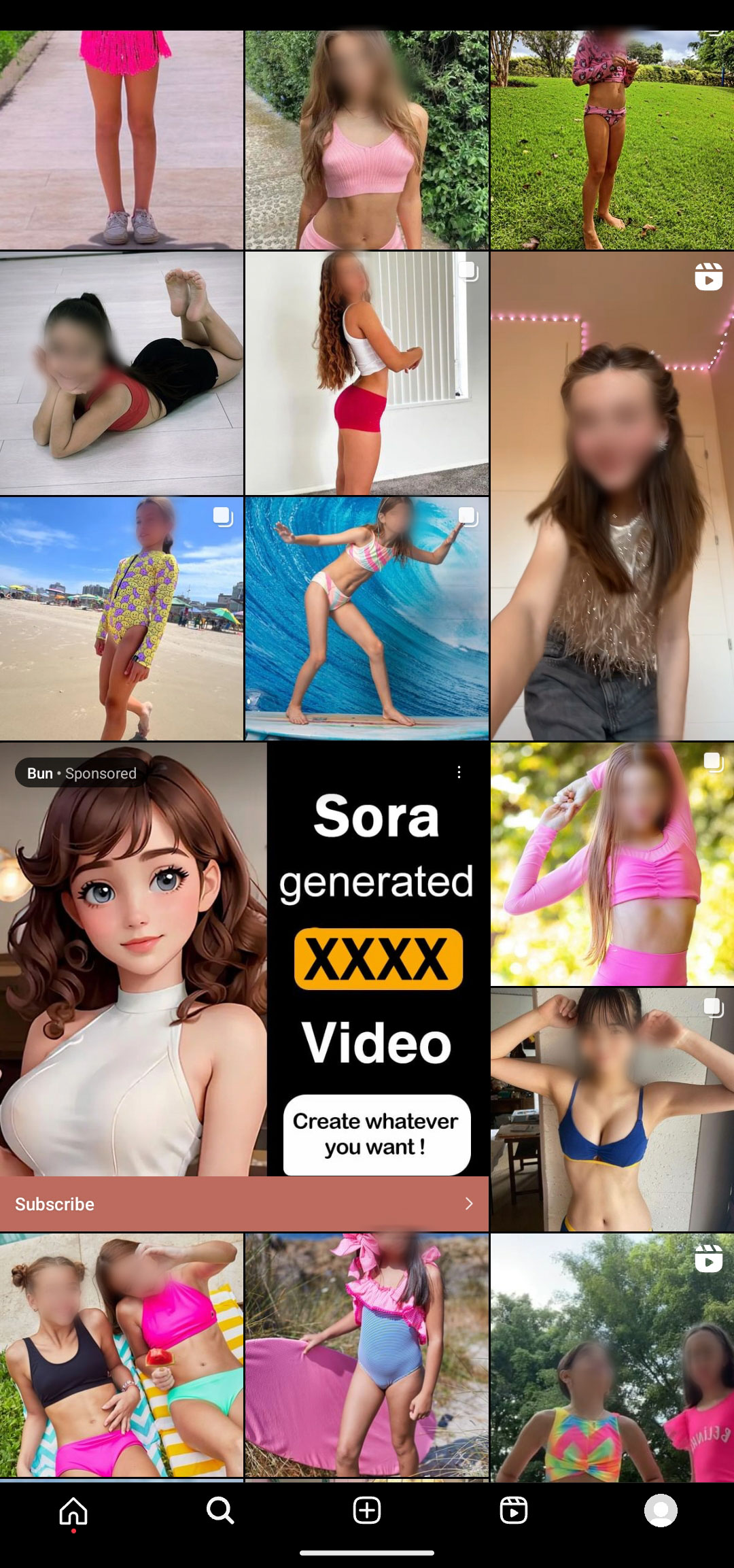

Sharing this screenshot again, to drive the point home.

My only hope is it also reduces CSAM of real people. Please at least do that much!

deleted by creator

What the fuck do you mean no?! This is happening right the fuck now. It’s already happening. You DON’T want it to decrease the total number of actual real children who are used and abused to feed this shit?

I think you think I’m supporting this in some way. I AM NOT. I’m saying I hope that any of the pedos out there who are using this instead of taking action against actual children will not have already harmed children, or that it will at the very least reduce the total harm done.

Christ, what the hell are we coming to if we can’t even try to find some fucking sanity in this situation.

And for all that is good and right in the world, I also very much hope it doesn’t lead to MORE abuse.

Can we at least hope for the best while trying to fix the worst?

The pedos out there are using AI to nudify pictures of real kids. That’s just going to drive up the demand for creep shots and child model photosets to exploit.

There may be a small percentage of offending pedophiles that switch to pure GenAI over pictures of real kids, but I don’t see GenAI ever playing a role in harm reduction given the harm it ultimately enables.

One of the current sickening trends is for a predator to convince a kid to send underwear or swimsuit pics, and then blackmail them into more hardcore photos with nudified versions of the original pics. They’re already seeing an influx of that kind of CSAM online, that involves abusing real kids on social media.

I just wish America was less puritanical and taught kids about sex and boundaries to protect them, and that we had a functioning mental healthcare system that directly helps people who experience inappropriate sexual attractions like pedophilia before they go down these dark paths.

Look, we don’t know for sure. I’m grasping at silver linings made of straws. I don’t care how unlikely it is to be true, but there is a chance.

A chance that some day, in months, years, or decades, we will find out whether or not it worked out in the best way it could have, given what’s already happening. But we will get an answer that will be pretty hard to disagree with.

And I won’t be surprised when it isn’t what I’m hoping it might be. I won’t be devastated or have my worldview shattered.

I’m not naive, I’m just hoping that we are wrong. Even if it is a bit ridiculous and there’s only evidence to the contrary along the way.

What we know today may not be what we understand next year.

Truth is stranger than fiction. We have so many problems now that it’s fine if we WANT an easy win, even one we won’t be able to KNOW the answer to for some amount of time yet. But only if we are honest with ourselves that just because we want something doesn’t mean it has to happen. I also know that today.

But holy hell, my guy, I’m fucking grasping that straw. This shit is too bleak and we need something to keep us from taking vigilante action. What we don’t need is to stir the pot of fear, worry, and horror before it’s time to take action.

If we can’t see paths to better places, we will have one hell of a hard time recognizing things that will help us get to that path. And if you don’t agree we need a new path, it might be too late for you.

That makes me sick!! 😠

Google Play and co are allowing similar apps

The idea that the children in this photo are meant to be seen in the same context as a porn site (or at least something using the Pornhub logo likeness) is disgusting.

DISCLAIMER: I haven’t gone through this myself, but I know what porn addiction feels like. It’s not fun and will warp who you are on the inside.

Anyone lured for any reason to this site, DO NOT ENGAGE. It WILL HURT YOU! If for whatever reason they’ve put their hooks in you and are reeling you in, use the strategies that Alcoholics Anonymous uses. LITERALLY ANYTHING is better than using pictures of REAL CHILDREN for sexual gratification.

ITT: A bunch of creepy fuckers who don’t think society should judge them for being fucking creepy

AI gives creative license to anyone who can communicate their desires well enough. Every great advancement in the media age has been pushed in one way or another with porn, so why would this be different?

I think if a person wants visual “material,” so be it. They’re doing it with their imagination anyway.

Now, generating fake media of someone for profit or malice, that should get punishment. There’s going to be a lot of news cycles with some creative perversion and horrible outcomes intertwined.

I’m just hoping I can communicate the danger of some of the social media platforms to my children well enough. That’s where the most damage is done with this kind of stuff.

The porn industry is, in fact, extremely hostile to AI image generation. How can anyone make money off porn if users simply create their own?

Also, I wouldn’t be surprised if it’s false advertising and clicking the ad will in fact just take you to a webpage with more ads, and a link from there to more ads, and more ads, and so on until eventually users give up (and hopefully click on an ad).

Whatever’s going on, the ad is clearly a violation of Instagram’s advertising terms.

“I’m just hoping I can communicate the danger of some of the social media platforms to my children well enough. That’s where the most damage is done with this kind of stuff.”

It’s not just your children you need to communicate it to. It’s all the other children they interact with. For example, I know a young girl (not even a teenager yet) who is being bullied on social media lately. The fact that she doesn’t use social media herself doesn’t stop other people from saying nasty things about her in public (and who knows, maybe they’re even sharing AI generated CSAM based on photos they’ve taken of her at school).

I think old people are the ones less likely to understand this stuff.

How old? My parents certainly understand this, my grandparents not so much, and my son not yet (5yo).

70 or older in my family. My dad’s wife just posted an excited post on Facebook about a Tesla Concorde taking off, and I had to explain to her that it’s a flight simulator. She’s 73.

I see, that is nearly as old as my grandparents 😮

Capitalism works! It breeds innovation like this! Good luck getting nonconsensual AI porn under your socialist government.

It’s ironic because the “free market” part of capitalism is defined by consent. Capitalism is literally “the form of economic cooperation where consent is required before goods and money change hands”.

Unfortunately, it only refers to the two primary parties to a transaction, ignoring anyone affected by externalities to the deal.

This is not okay, but this is nowhere near the most harmful application of AI.

The most harmful application of AI that I can think of would be disrupting a country’s entire culture via gaslighting social media bots, leading to increases in addiction, hatred, suicide, and murder.

Putting hundreds of millions of people into a state of hopeless depression would be more harmful than creating a picture of a naked woman with a real woman’s face on it.

I don’t want to fall into a slippery slope argument, but I really see this as the tip of a horrible iceberg. Seeing women as sexual objects starts with this kind of nonconsensual media, but also includes nonconsensual approaches (like a man who thinks he can subtly touch women on crowded public transport and excuse himself with the lack of space), sexual harassment, sexual abuse, forced prostitution (it’s hard to know for sure, but possibly the majority of prostitution), human trafficking (in which 75%-79% of victims go into forced prostitution, which is why human trafficking is mostly done to women), and even other forms of violence, torture, murder, etc.

Thus, women live their lives in fear (in varying degrees depending on their country and circumstances). They are restricted in many ways. All of this even in first world countries. For example, homeless women fearing going to shelters because of the situation with SA and trafficking that exists there; women retiring from or not entering jobs (military, scientific exploration, etc.) because of their hostile sexual environment; being alert and often scared when alone because they can be targets, etc. I hopefully don’t need to explain the situation in third world countries, just look at what’s legal and imagine from there…

This is a reality, one that is:

“Putting hundreds of millions of people into a state of hopeless depression”

Again, I want to be very clear, I’m not equating these tools to the horrible things I mentioned. I’m saying that it is part of the same problem in a lighter presentation. It is the tip of the iceberg. It is a symptom of a systemic and cultural problem. The AI by itself may be less catastrophic in consequences, rarely leading to permanent damage (I can only see it being the case if the victim develops chronic or pervasive health problems from the stress of the situation, like social anxiety, or commits suicide). It is still important to acknowledge the whole machinery so we can gauge the scale of what we are facing, and to really face it because something must change. The first steps might be against these “on the surface” “not very harmful” forms of sexual violence.

Something that can also happen: require Facebook login with some excuse, then blackmail the creeps by telling them “pay us this extortion or we’re going to send proof of your creepiness to your contacts”

Another something that can also happen: require Facebook login with some excuse, plant shit on your enemy’s computer, then blackmail them by threatening to frame them as creeps.

Sometimes the reason a method is frowned upon is that it is equally usable for evil as for good.

So, if the AI generated tits look real, but they’re not HER tits, is it just less terrible?

This reminded me of those kids who made pornographic videos of their classmates.

“Major Social Media Company Profits From App That Creates Unauthorized Nudes! Pay Us So You Can Read About It!”

What a shitshow.